Power Real-Time Intelligence and AI

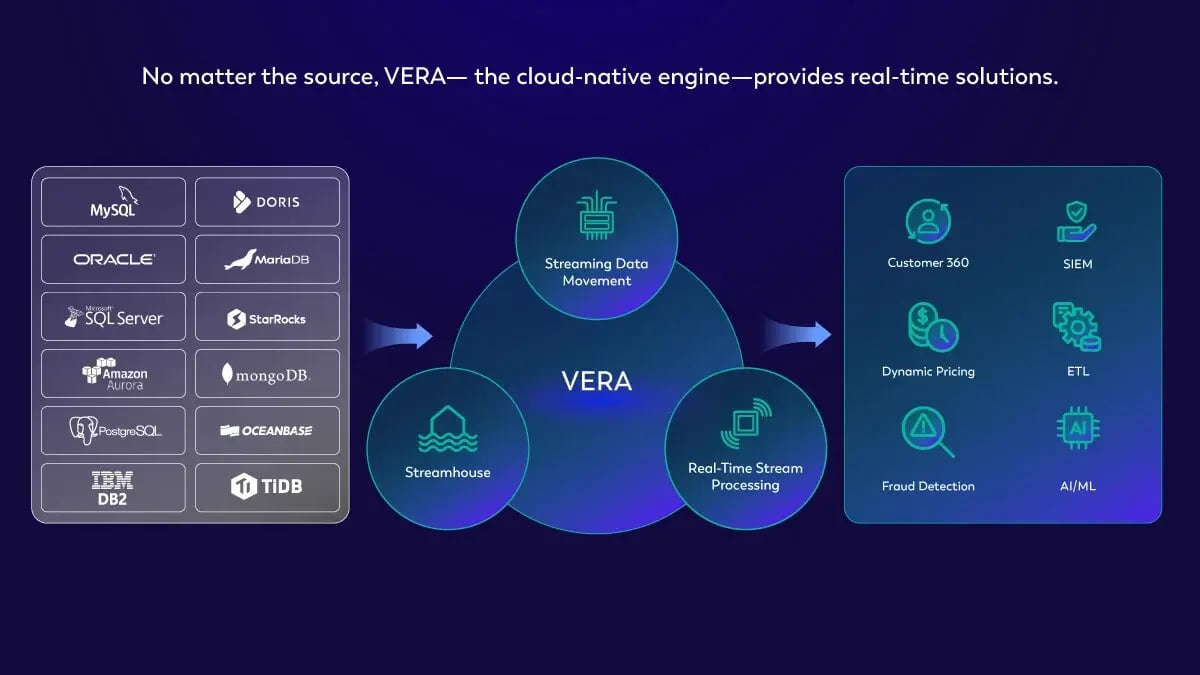

Meet the Unified Streaming Data Platform powered by VERA, the cloud-native engine built to revolutionize Apache Flink®.

Own Your Now

35,000+

Jobs running on a single cluster

>10 Petabytes

Data ingested per day

>2 Million

Cores operated in an elastically scaling cluster

6.9 Billion

Records processed per second

10 Trillion

Records ingested per day

Brought to you by the original creators of Apache Flink®

Unified Streaming Data Platform

Derive insights, make decisions, and take actions with data from any source.

Benefits of Ververica's Unified Streaming Data Platform

Developer Efficiency

Maximize performance and productivity while minimizing resource use with enterprise-grade, flexible tools

Operational Excellence

Focus on delivering business value rather than operating your streaming data environment

Security

Give the right people access to the right data at the right time

Data Governance

Get an organized, secure, and consistent view of your data

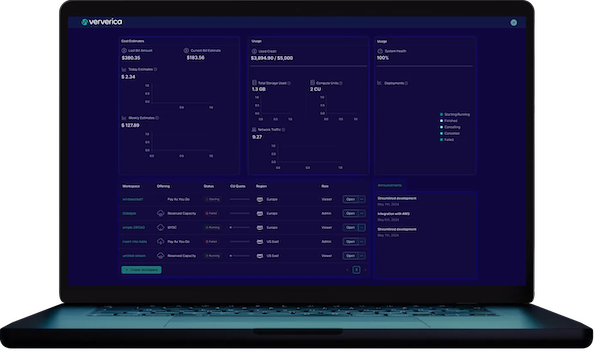

Multi-Tenancy

Isolate workspaces, separate production and test environments, and rapidly provision and de-provision resources on demand

Elasticity

Automatically and dynamically scale resources and storage up or down as needed, based on the current workload and capacity

Deploy Anywhere, Anytime

Deploy on-premises, on Ververica Cloud, or get the best of both worlds with a zero-trust Bring Your Own Cloud (BYOC) deployment

Ververica Platform: Self-Managed

Ververica Cloud: Managed Service

Ververica Cloud: Bring Your Own Cloud

Architecture diagram labels: Source Data & Events, Source Integration, Unified Streaming Data Platform (VERA Engine) on Public Cloud or Private Cloud, Sink Integration, Destination Data and Events

Brands You Know Trust Ververica

Booking.com, a leading travel ecosystem serving both partners and travelers, faced challenges with processing security data streams and orchestrating Flink applications with stateful upgrades and multi-tenancy requirements. Their previous approach proved cumbersome and inadequate for their needs.

Cash App, a provider of consumer financial services, highlights the transformative outcomes of integrating with Ververica’s software. With Ververica's solution, Cash App streamlined deployments, gained visibility into Flink jobs, and empowered teams with customizable features.

Ready to watch a demo or talk to a live expert?

The Ververica Team is here to help.

Latest News

Your AI Coding Assistant Can't Touch Your Streaming Platform. Until Now

Introducing Ververica’s Model Context Protocol (MCP) Server (Preview): Native Large Language Models...

April 2, 2026 by Vladimir Jandreski

Zero Trust Theater and Why Most Streaming Platforms Are Pretenders

“Zero Trust” used to mean something. It described a foundational security model where nothing...

February 26, 2026 by Ben Gamble

No False Trade-Offs: Introducing Ververica Bring Your Own Cloud for Microsoft Azure

Azure customers demand it. In 2026, Ververica delivers. Ververica’s Unified Streaming Data...

February 19, 2026 by Serhat Yanıkoğlu and Karin Landers

Additional Resources

Pricing

Ververica's Unified Streaming Data Platform pricing and support details for each deployment.

See Pricing

Ververica Academy

A comprehensive learning platform that offers a wide range of courses and educational resources.

Learn Apache Flink

Ververica Documentation

Your go-to resource for getting the most out of Ververica's Unified Streaming Data Platform.

Read Docs

Let’s Talk

Ververica's Unified Streaming Data Platform helps organizations create more value from their data, faster than ever. Our customers are generally up and running in days and start to see a positive impact immediately.

Once you submit this form, we will get in touch with you and arrange a follow-up call to demonstrate how our Platform can solve your particular use case.